RDF vs Property Graphs – Which One Should You Use?

The RDF vs Property Graphs debate has been going on for years – and most of the comparisons you’ll find online read like marketing materials. One side comes from someone in the semantic web community who sees property graphs as a simple approach. The other side is from someone in the Neo4j ecosystem who views RDF as an outdated concept from the 1990s.

The truth, as is often the case, is more nuanced than what either side admits. I’ve worked with both models in real-world applications. I have built ontologies in OWL that support reasoning engines, and I have created property graph schemas in Neo4j that manage millions of traversals per second. They are different tools, and the choice between them is not random, but it is also not as straightforward as many comparisons imply.

This article presents the engineer’s perspective on this comparison. It’s not about marketing. Unlike most comparisons online, this one goes deep enough to be truly helpful, whether you are selecting technology for a project or getting ready for a technical interview.

The Fundamental Difference

Before discussing features, tools, or query languages, you need to grasp the philosophical difference between these two models because everything else follows from it.

RDF was built to represent knowledge on the open web. It assumes that data comes from various sources, needs clear identification across those sources, and must contain enough meaning for machines to understand without human input. Each node in an RDF graph has a URI – a unique identifier that has the same meaning across all systems.

Property graphs are designed to support applications. Their primary assumption is that you control your data, understand its meaning, and require quick traversal. Nodes and relationships have properties (key-value pairs) directly attached, making queries intuitive and efficient.

These models do not offer competing answers to the same question. RDF asks: “How do we represent knowledge in a way that’s universally understandable?” Property graphs ask: “How do we model connected data in a fast and easy way?” Once you recognize that distinction, the rest of this comparison becomes clearer.

The RDF Data Model

Everything Is a Triple

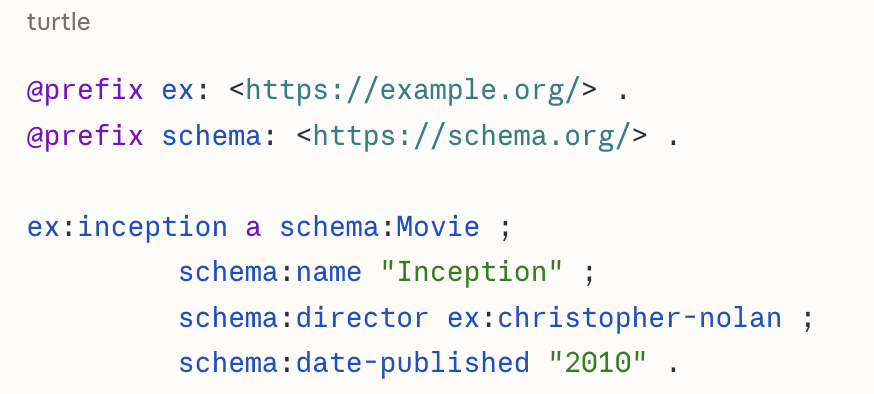

In RDF, every fact is expressed as a triple: Subject – Predicate – Object.

Everything, including entities, attributes, and relationships, is a triple. An attribute like “name” is just another predicate pointing to a literal value. The URIs are important; they are not just IDs but globally unique identifiers that can connect to definitions shared across systems.

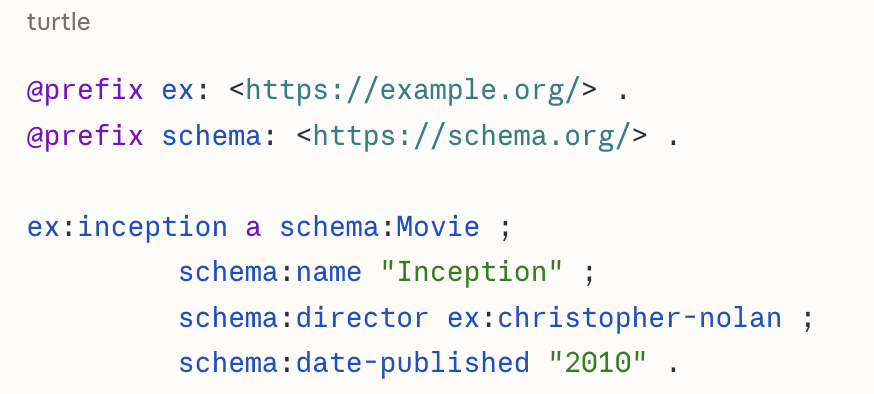

RDF Serialization Formats

RDF data can be serialized in multiple formats, each suited to different contexts:

- Turtle (.ttl): Most human-readable. Best for writing ontologies and manual review. The example above is Turtle.

- N-Triples (.nt): One triple per line with fully expanded URIs. Preferred for data exchange and streaming large datasets.

- JSON-LD (.jsonld): RDF expressed as JSON. The format of choice for web APIs, linked data, and schema.org markup.

- RDF/XML (.rdf): The original W3C serialization. Verbose but still common in older systems.

- TriG / N-Quads: Extensions of Turtle and N-Triples that support named graphs for tracking provenance.

In practice, you’ll write in Turtle, exchange in N-Triples or JSON-LD, and encounter RDF/XML in older systems.

Named Graphs

A named graph lets you separate the physical existence of triples within a knowledge graph. This gives different subsets of data a unique, addressable identity. You can track the origin of data, apply different access controls to various subsets, and keep data from multiple sources without blending them. Named graphs are crucial for enterprise deployments where tracking data origin and managing data are important. RDF stores can contain multiple named graphs, each identified by a URI.

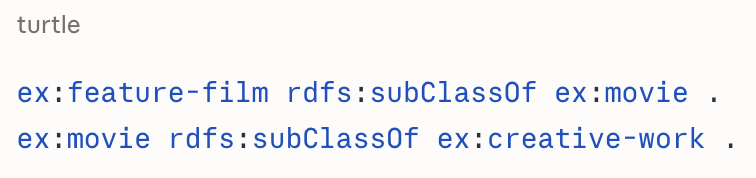

RDFS vs OWL

RDFS (RDF Schema) is the lightweight vocabulary for defining class hierarchies and property types:

If you only need subclass hierarchies and basic property typing, RDFS is enough and supported by every triple store.

OWL adds much more expressivity, including property characteristics like transitivity, symmetry, and inverse relationships, as well as cardinality constraints, equivalent classes, and disjointness axioms. OWL has three profiles (Lite, DL, and Full) with different trade-offs between expressivity and reasoning complexity. Most production ontologies use OWL DL, which offers a balance between power and manageability.

The choice between RDFS and OWL essentially depends on how much semantic support your use case requires. Start with RDFS and add OWL constructs as your domain model needs them.

Data Validation with SHACL

Ontologies define what things mean. SHACL (Shapes Constraint Language) specifies how data should look, including required properties, data types, cardinality constraints, and value ranges. One important distinction to understand is that SHACL is for validation, not enforcement.

Unlike a relational database constraint that blocks invalid data from being entered, SHACL reports violations after the fact. It tells you what is wrong but doesn’t prevent bad data from going into the store. This makes it a valuable tool for auditing and quality assurance, but it means your ingestion pipeline must act on SHACL reports. Think of it as a schema validation layer for RDF, similar to JSON Schema but much more expressive. Most enterprise triple stores support SHACL natively. If you’re building a production knowledge graph without SHACL, you are going without a safety net.

The Property Graph Data Model

Nodes, Edges, and Properties

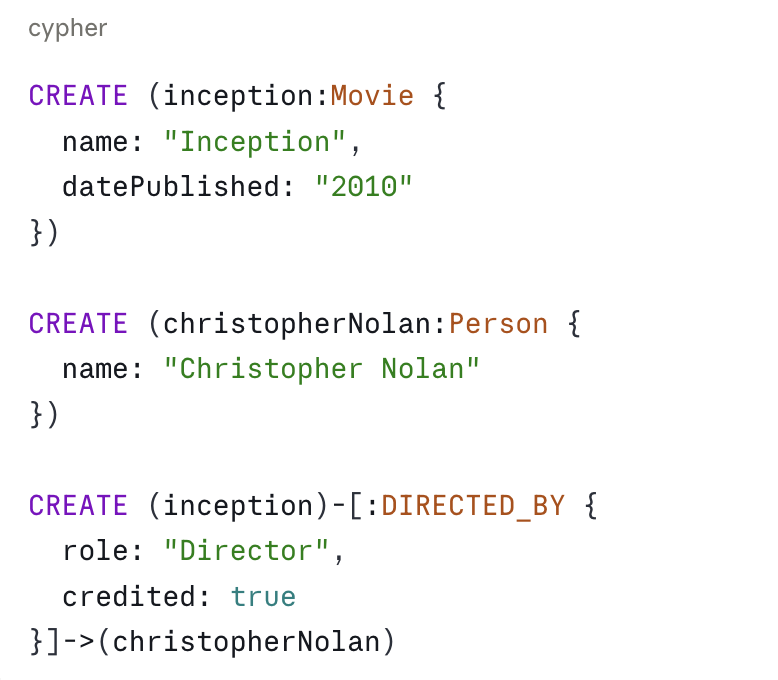

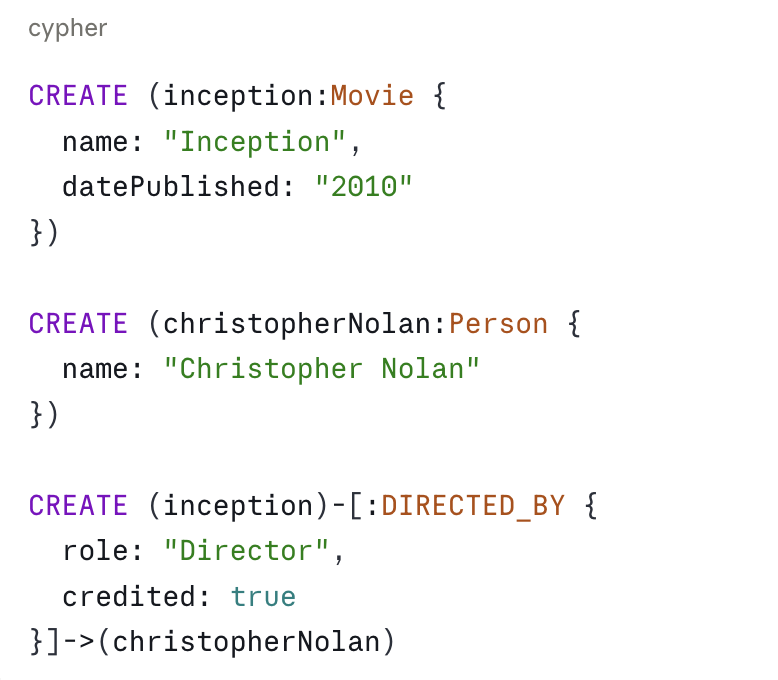

In a property graph (Neo4j Cypher syntax):

Nodes have labels and properties. Relationships are directed, typed, and can also have their own properties. The DIRECTED_BY relationship includes role and credited information. This is one of the most important differences from RDF.

Index-Free Adjacency

Each node in a property graph keeps direct pointers to its neighbouring nodes and relationships. Traversing a relationship means following a pointer in memory; there is no join or index lookup involved. The result is that traversal time does not depend on the overall size of the graph. A 3-hop traversal on a billion-node graph takes the same time as on a million-node graph.

This design choice makes property graph databases fast for operational tasks. It also creates one known weakness: highly connected nodes, or supernodes, with millions of relationships can create long linked lists that may slow down traversal performance and need special handling.

Graph Algorithms

Property graph databases offer native graph algorithm libraries that work directly on the graph structure. Common categories include:

- Centrality: PageRank, betweenness centrality, degree centrality

- Community detection: Louvain, label propagation

- Pathfinding: Dijkstra, A*, shortest path variants

- Similarity: Jaccard similarity, node embeddings

These algorithms are available via Neo4j’s Graph Data Science (GDS) library and have no direct equivalent in RDF triple stores. If your use case involves network analysis, influence scoring, or cluster detection, this is a significant advantage of property graphs.

The Relationship Properties Problem

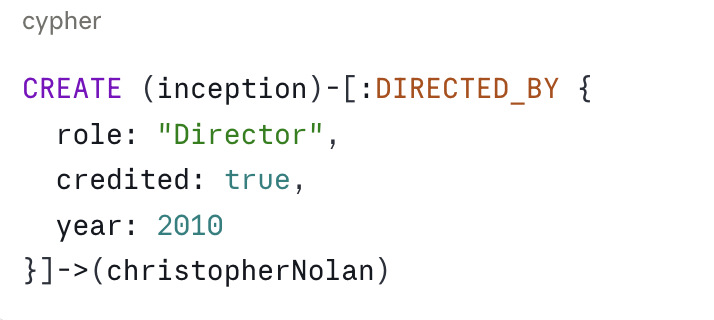

Here’s the situation that confuses teams switching from property graphs to RDF. You are modeling a directing relationship, not just “Christopher Nolan directed Inception” but with metadata: in what capacity, whether he was credited, and the year it was released.

In a property graph, this is simple:

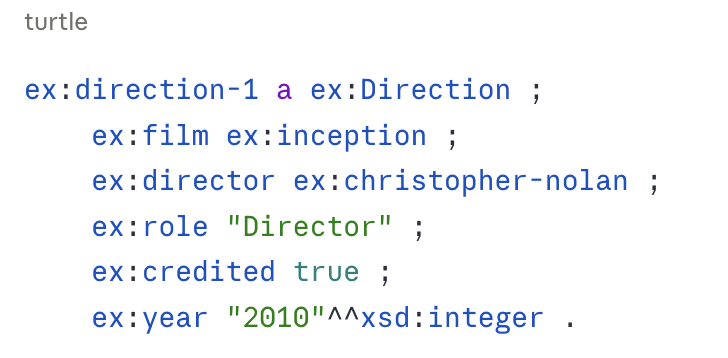

In RDF, relationships are predicates, and predicates cannot have properties. To model the same scenario, you need to use reification – turning the relationship into a node:

You’ve added an intermediate node to hold the relationship metadata. What used to be one hop is now two or three. Your model is more complex, and your queries take longer.

RDF-star (RDF*) partially solves this issue; it allows metadata to be attached directly to triples without an intermediate node. Support is growing among triple stores (GraphDB, Stardog, Apache Jena all support it), but it is not yet universal. For new projects, RDF-star is worth considering. For systems needing wide compatibility with tools, classic reification is still the safer choice.

Query Languages: SPARQL vs Cypher

SPARQL is the W3C standard query language for RDF. Every triple store uses it. It employs triple patterns to describe the structure of the data you need. It supports recursive traversal through property paths and allows querying across multiple endpoints at the same time. The learning curve is steeper than many other query languages. However, because it is standardized, your skills will transfer to any RDF system.

Cypher is Neo4j’s query language. It is one of the easiest graph query languages to read. Its visual pattern matching syntax aligns well with how you think about graphs. Most developers can learn it in hours instead of weeks. The new GQL (Graph Query Language) ISO standard aims to become a vendor-neutral alternative to Cypher for property graph databases.

We will do a complete SPARQL vs. Cypher deep dive, including real queries, common patterns, and performance considerations, in a separate article.

Performance at Scale

Triple Store Architecture

Triple stores typically maintain several indexes over subject-predicate-object combinations to support various query patterns efficiently. Modern stores like GraphDB, Stardog, and Ontotext use B-trees, compressed bitsets, and columnar storage to lower overhead. Triple stores generally perform better on complex semantic queries and worse on deep operational traversals when compared to property graph databases.

When Performance Actually Matters

For deep traversal of operational graphs, such as fraud ring detection, shortest path, or community detection, property graph databases are faster. The index-free adjacency advantage increases with traversal depth.

For complex inference queries, like finding all entities whose inferred type meets a property chain constraint, triple stores with reasoning engines are more suitable. They are designed for this type of semantic query.

For federated queries across multiple sources, SPARQL is the clear winner. There is no equivalent in property graphs.

The basic rule of thumb is this: if your main focus is fast traversal of large operational data, property graphs are faster. If your main focus is complex semantic querying with reasoning, the performance difference is less important, and the semantic capabilities are more crucial.

How Each Model Fits Into Modern AI Architectures

The rise of LLMs has added a new layer to this debate. It’s not just about data modeling preferences anymore. It’s now about which graph model makes your AI system more reliable, explainable, and based on verified facts.

The short answer is that both models have a role, and they serve different purposes.

RDF in AI – The Semantic Grounding Layer

When an LLM needs to answer questions about a specific domain, it needs verified, structured knowledge to reason with instead of guessing. An OWL ontology gives your AI system a formal, machine-readable definition of your domain. Every class, property, and constraint is clearly defined. This allows for three things that property graphs can’t match: fact generation based on inference, explainability that can be audited, and automated checking of semantic consistency through an OWL reasoner. This is where RDF and OWL ontologies shine in AI architectures.

Property Graphs in AI – The GraphRAG Engine

GraphRAG depends on quick, multi-hop traversal of entity relationships to build context for LLMs. Property graphs are designed for this purpose – Neo4j can traverse millions of relationships every second. Neo4j’s built-in Vector Search also allows for semantic similarity search and graph traversal in a single query, which is becoming the standard for production GraphRAG systems.

A concrete example is a financial AI assistant answering the question, “What are the risks associated with this company?”. It needs to:

- Identify the company entity.

- Traverse relationships to subsidiaries, directors, legal entities, and transactions.

- Find related regulatory filings and news items using semantic similarity.

- Assemble all of this into coherent context for the LLM.

Steps 2 and 3 together, graph traversal combined with vector search, are what property graphs with integrated vector indices do extremely well.

The Production Architecture Nobody Talks About

The RDF vs property graphs debate becomes irrelevant at the enterprise AI level. The most advanced systems being built today don’t choose between them – they use a layered architecture:

Layer 1 – OWL/RDF Ontology: This is the formal semantic definition of the domain. It defines what things are, what relationships are valid, and what can be inferred. It is the source of semantic truth.

Layer 2 – Property Graph (Operational): This is the high-performance graph database that holds actual instance data. It is populated from source systems through ETL pipelines and kept in sync with the ontology layer.

Layer 3 – Vector Index: This contains embeddings of unstructured content that are stored alongside or within the property graph. They are used for retrieving semantic similarities.

Layer 4 – LLM: This interprets user queries, manages retrieval across layers 2 and 3, and generates responses based on verified facts from the knowledge graph.

The ontology in Layer 1 determines what the property graph in Layer 2 can include. The property graph provides structured and traversable context for the LLM. This setup is what production-grade, hallucination-resistant AI looks like in knowledge-heavy domains.

The Interoperability Factor

Since every RDF entity has a URI that links to shared vocabularies like schema.org, SKOS, SNOMED CT, and FIBO, RDF data is naturally interoperable across systems and organizations. SPARQL federation takes this further, allowing a single query to pull data from your local store, Wikidata, and DBpedia at the same time. Property graphs don’t have an equivalent; merging two Neo4j databases from different organizations requires custom ETL work. In domains with established shared vocabularies, this interoperability offers a real competitive advantage that often doesn’t appear in feature comparison tables.

The Decision Framework

| Question | Use |

| Need formal reasoning or inference? | RDF + OWL |

| Data needs to integrate with shared standards? | RDF |

| Primary use case is high-performance traversal? | Property Graph |

| Relationships need properties frequently? | Property Graph |

| Team lacks semantic web experience? | Property Graph |

| Building a GraphRAG pipeline? | Both – PG for retrieval, RDF for semantics |

| Need rich data validation? | RDF + SHACL |

The Honest Bottom Line

If you’re starting a new project today with a typical software engineering team to build an operational application, use a property graph. The tools are mature, the query language is user-friendly, and the performance is excellent.

If you’re creating a system where the meaning of data must be clearly defined, shared across organizational boundaries, or automatically processed, RDF and OWL are worth the investment. The learning curve is steeper, but the semantic abilities are truly essential.

If you’re developing a serious enterprise AI system that needs to do both, use a layered architecture. Use RDF for the ontology and semantic truth layer, property graphs for the operational retrieval layer, vector indices for unstructured content, and LLMs on top. It’s more complex, but this is how the most advanced knowledge graph deployments in production actually look.

Next up: From CSV to Knowledge Graph in 60 Minutes, our first hands-on tutorial, where we'll build a real knowledge graph from scratch. No theory, just code.

Missed the earlier articles? Start with What is a Knowledge Graph? or Why Knowledge Graphs Are the Missing Layer in Modern AI.